Web application: client-side vs server-side processing

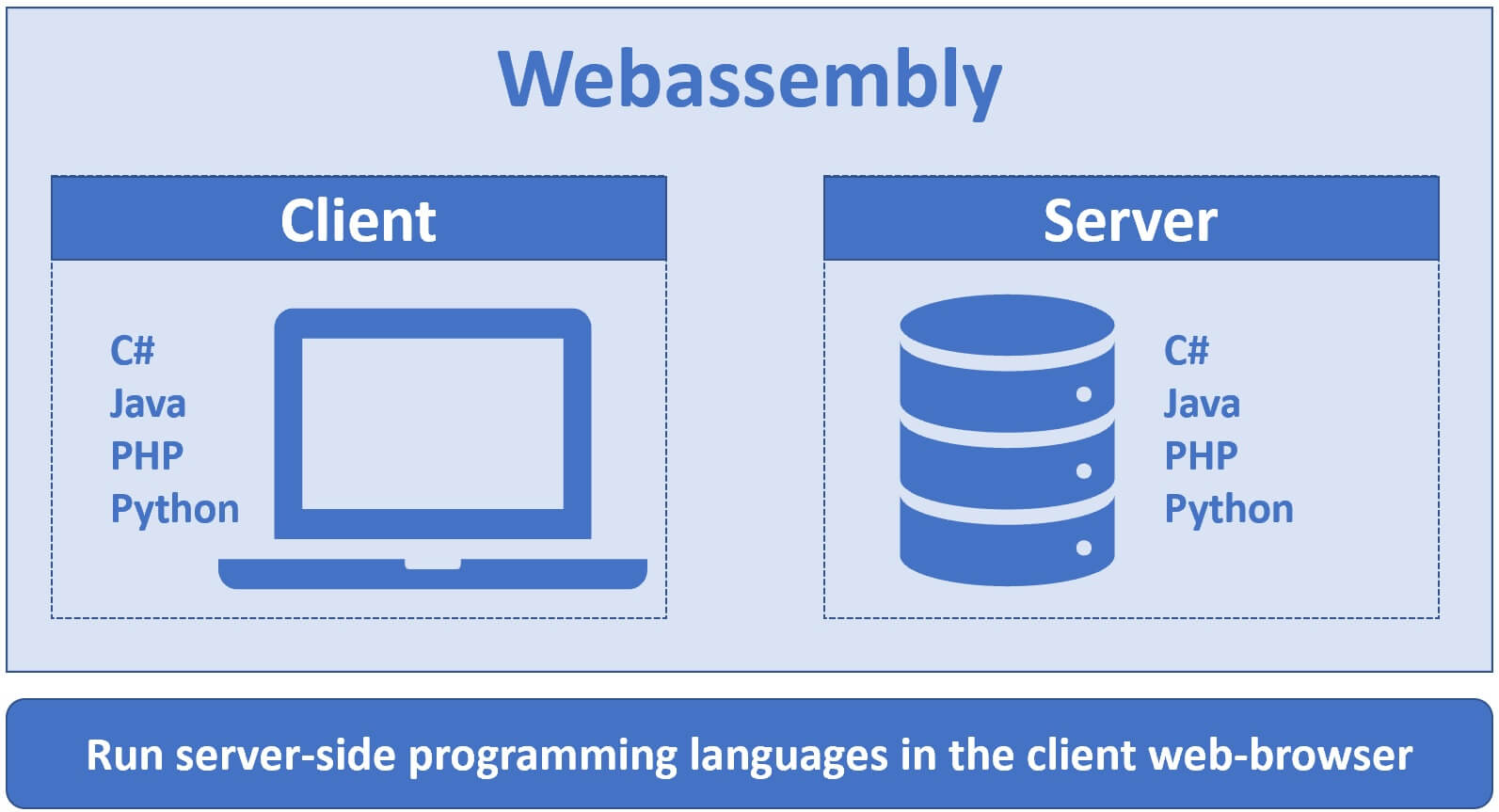

With the arrival of WebAssembly, we can now run code written in traditionally server-side programming languages on the client machine, in the web browser. Almost any server-side programming language really: C#, Java, PHP, Python etc.

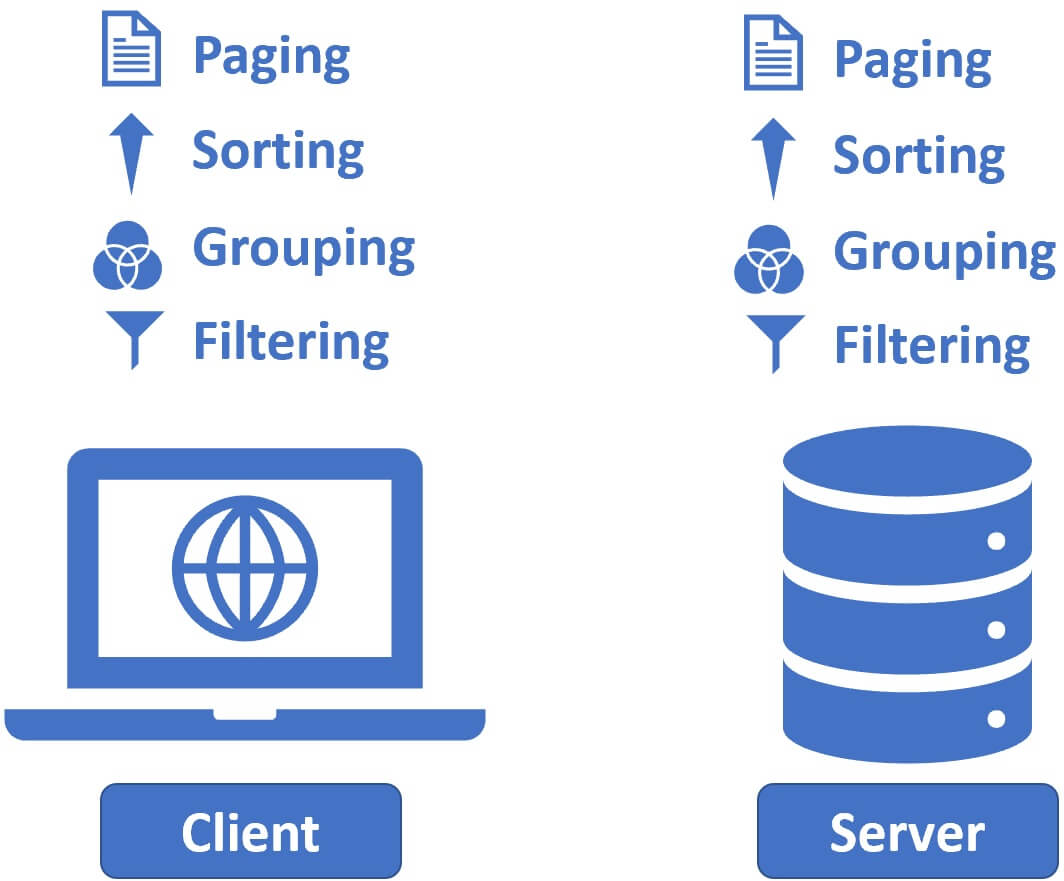

So the question is: if we are building a data-driven web application, should we perform operations on the data, like paging, sorting, grouping and filtering, on the client machine or on the server?

Well, each approach has its pros and cons. Which approach is better depends on several factors, such as:

- The volume of data, i.e. how many data rows you have.

- How powerful your client machines are, i.e. their processing capabilities, and whether you even know this.

- How often these operations, i.e. paging, sorting, filtering etc., are performed.

- Your application architecture etc.

Client-side processing

In general, downloading the entire dataset to the client machine is okay if you have just a few hundred or even a few thousand rows. The benefit of this approach is that, once the data is downloaded, no further round trips to the server are required. Operations like paging, sorting, grouping and filtering are processed completely on the client machine. So basically we are using the processing power of our client machines. This also means the load on the server is reduced, which allows the server to serve more clients.
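As a rough illustration of what client-side processing amounts to, the sketch below filters, sorts and pages a dataset that is already held in memory. It is written in Python purely for illustration; in a WebAssembly application the same logic would be written in whatever language runs in the browser. The `process_rows` function and the sample `employees` rows are hypothetical names, not part of any library.

```python
from typing import Any, Callable, Optional

Row = dict[str, Any]


def process_rows(
    rows: list[Row],
    predicate: Optional[Callable[[Row], bool]] = None,
    sort_key: Optional[str] = None,
    descending: bool = False,
    page: int = 1,
    page_size: int = 10,
) -> list[Row]:
    """Filter, sort and page rows that are already in client memory."""
    # Filtering: keep only the rows that match the predicate, if one is given.
    result = [r for r in rows if predicate is None or predicate(r)]
    # Sorting: order the filtered rows by the requested column.
    if sort_key is not None:
        result = sorted(result, key=lambda r: r[sort_key], reverse=descending)
    # Paging: slice out the requested page (pages are numbered from 1).
    start = (page - 1) * page_size
    return result[start:start + page_size]


# Hypothetical sample data, downloaded once from the server.
employees = [
    {"id": 1, "name": "Mary", "salary": 5000},
    {"id": 2, "name": "John", "salary": 7000},
    {"id": 3, "name": "Sara", "salary": 6500},
    {"id": 4, "name": "Tom", "salary": 4000},
]

# No server round trip: everything below runs against the in-memory list.
top_paid = process_rows(
    employees,
    predicate=lambda r: r["salary"] >= 5000,
    sort_key="salary",
    descending=True,
    page=1,
    page_size=2,
)
print([r["name"] for r in top_paid])  # ['John', 'Sara']
```

Note that every call works on the full list, so both memory use and processing time grow with the number of rows held on the client.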

However, it's not a good idea to assume your clients always use high-end machines to access your application. Your clients may use all sorts of devices: laptops, desktops, tablets, mobile phones, any type of device really. If these client machines have limited RAM or CPU, i.e. less processing power, the application may not perform very well and eventually the end users may stop using your app.

Also, depending on the client machine's processing power, the network speed and the amount of data to download, the initial request may take a very long time.

Server-side processing

In general, if you have lots and lots of data, maybe many thousands or millions of rows, it is better to perform processing on the server instead of the client.

For example, if paging and sorting are processed on the server, we only need to send a few rows (10, 15, 20 or maybe 100). How many rows are sent from the server to the client depends on the page size. There's no need to download the entire dataset onto the client machine. Request and response cycles are quick, because there are only a few rows to download from the server. Also, database engines are optimized for operations like sorting and filtering, so they are usually much faster than doing the same operations in code on the client machine.
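Server-side paging and sorting can be sketched as follows, using Python's built-in `sqlite3` module and an in-memory database so the example is self-contained. The `employees` table, the `fetch_page` function and the `SORTABLE_COLUMNS` whitelist are assumptions made for illustration; a real application would use its own database and data access layer.

```python
import sqlite3

# In-memory database with a hypothetical `employees` table, for illustration only.
conn = sqlite3.connect(":memory:")
conn.execute(
    "CREATE TABLE employees (id INTEGER PRIMARY KEY, name TEXT, salary INTEGER)"
)
conn.executemany(
    "INSERT INTO employees (name, salary) VALUES (?, ?)",
    [(f"Employee {i}", 1000 + i) for i in range(1, 1001)],
)

# Column names cannot be passed as bound parameters, so only
# whitelisted names are ever placed into the ORDER BY clause.
SORTABLE_COLUMNS = {"id", "name", "salary"}


def fetch_page(conn, page, page_size, sort_by="id"):
    """Return one page of rows; sorting and paging happen in the database."""
    if sort_by not in SORTABLE_COLUMNS:
        raise ValueError(f"Cannot sort by {sort_by!r}")
    offset = (page - 1) * page_size  # pages are numbered from 1
    cursor = conn.execute(
        f"SELECT id, name, salary FROM employees "
        f"ORDER BY {sort_by} LIMIT ? OFFSET ?",
        (page_size, offset),
    )
    return cursor.fetchall()


# Only 10 of the 1000 rows leave the database for this request.
rows = fetch_page(conn, page=3, page_size=10, sort_by="id")
print(len(rows), rows[0])  # 10 (21, 'Employee 21', 1021)
```

Only the requested page travels over the network, which is why the response stays small regardless of how many rows the table holds.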

Very common interview question

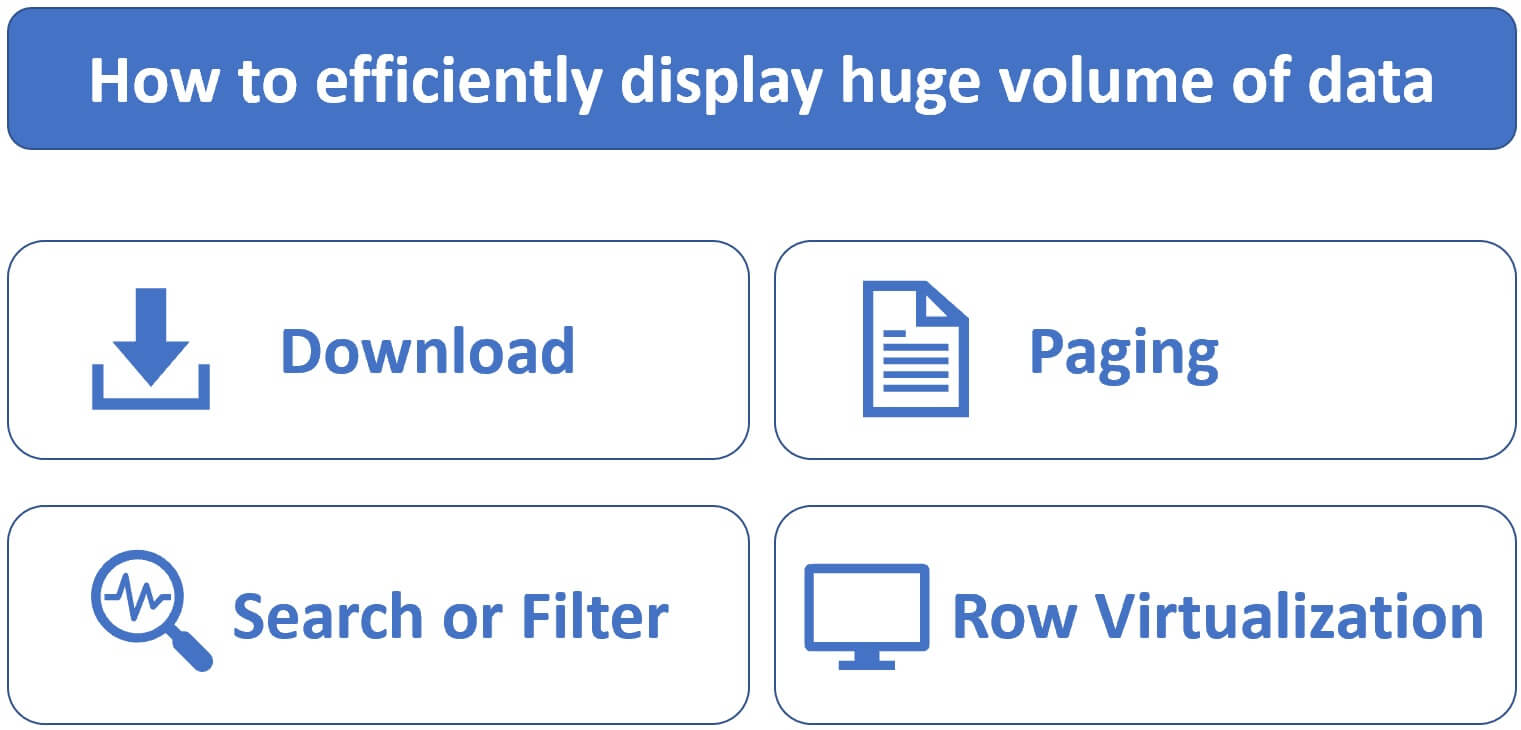

I have over a million rows in a database table and I want to display them on a web page. What approach would you take to efficiently display this huge volume of data?

Displaying the entire dataset of over a million rows in one go is a very bad idea. It takes too much time to download and may overwhelm both the browser's memory and its rendering engine. So, depending on the application requirements and what the users are trying to achieve, there are several approaches to efficiently display huge volumes of data.

Download option

One approach is to provide a download option. Maybe the end user is doing some analysis on the data and needs the entire dataset. In that case, provide a download option instead of rendering the data on the web page. Again, depending on the requirements, you may provide different download formats, such as Excel, CSV, PDF etc.
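A CSV download of a large table does not have to be built in memory all at once. The sketch below, using only Python's standard `csv` and `io` modules, yields the export in chunks that a web framework could stream to the browser. The `csv_chunks` function name and the chunk size are assumptions for illustration, and how the chunks are sent as a response depends on the framework you use.

```python
import csv
import io
from typing import Iterable, Iterator, Sequence


def csv_chunks(
    header: Sequence[str],
    rows: Iterable[Sequence],
    chunk_size: int = 1000,
) -> Iterator[str]:
    """Yield CSV text in chunks so the whole export never sits in memory."""
    buffer = io.StringIO()
    writer = csv.writer(buffer)
    writer.writerow(header)
    for count, row in enumerate(rows, start=1):
        writer.writerow(row)
        if count % chunk_size == 0:
            # Hand over what has been written so far, then reuse the buffer.
            yield buffer.getvalue()
            buffer.seek(0)
            buffer.truncate(0)
    # Emit whatever is left (or just the header if there were no rows).
    if buffer.tell() > 0:
        yield buffer.getvalue()


# Hypothetical rows; in practice these would come from a database cursor.
sample_rows = ((i, f"Employee {i}") for i in range(1, 6))
chunks = list(csv_chunks(["id", "name"], sample_rows, chunk_size=2))
export = "".join(chunks)
print(len(chunks))
print(export)
```

Because `rows` can be any iterable, a database cursor can be passed in directly, so rows are read and written out as the download progresses.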

Paging

Paging is another approach, and it is very useful if the user does not need all the data rows in one go. Depending on the page size, display 10, 50 or 100 rows at a time. The user can click on the page numbers at the bottom to retrieve the next set of rows they want. It doesn't matter how many rows we have in the database table: since we are retrieving and displaying only a few rows at a time (maybe 50 or 100), performance should not be an issue.
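The page numbers shown at the bottom follow from simple arithmetic on the total row count and the page size. Here is a minimal sketch; the `page_info` function and the keys of the dictionary it returns are hypothetical names chosen for this example.

```python
import math


def page_info(total_rows: int, page_size: int, requested_page: int) -> dict:
    """Work out which rows a page covers, given the total row count."""
    # Always report at least one page, even for an empty table.
    total_pages = max(1, math.ceil(total_rows / page_size))
    # Clamp the requested page into the valid range 1..total_pages.
    page = min(max(requested_page, 1), total_pages)
    # 1-based positions of the first and last row on this page.
    first_row = (page - 1) * page_size + 1 if total_rows else 0
    last_row = min(page * page_size, total_rows)
    return {
        "page": page,
        "total_pages": total_pages,
        "first_row": first_row,
        "last_row": last_row,
    }


info = page_info(total_rows=1_000_000, page_size=50, requested_page=3)
print(info)
# {'page': 3, 'total_pages': 20000, 'first_row': 101, 'last_row': 150}
```

Clamping the requested page means a stale link to a page that no longer exists still lands on the last valid page instead of an empty one.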

Search or Filter page

Provide a search page so the end user can search, filter and retrieve only the data they are looking for. We can even combine these 3 different approaches: Search, Paging and Download.
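Searching combined with paging might look like the sketch below, which builds a parameterized query from whichever filters the user filled in. It uses Python's built-in `sqlite3` module with an in-memory database; the `employees` table, its columns and the `search_employees` function are assumptions made for illustration.

```python
import sqlite3

# Hypothetical table and sample rows, for illustration only.
conn = sqlite3.connect(":memory:")
conn.execute(
    "CREATE TABLE employees (id INTEGER PRIMARY KEY, name TEXT, department TEXT)"
)
conn.executemany(
    "INSERT INTO employees (name, department) VALUES (?, ?)",
    [("Mary", "IT"), ("John", "HR"), ("Maria", "IT"),
     ("Sam", "Payroll"), ("Mark", "HR")],
)


def search_employees(conn, name_contains=None, department=None,
                     page=1, page_size=10):
    """Return (total_matches, rows_for_page) for the given optional filters."""
    clauses, params = [], []
    if name_contains:
        # User input is bound as a parameter, never pasted into the SQL text.
        # Note: % and _ inside the input still act as LIKE wildcards here.
        clauses.append("name LIKE ?")
        params.append(f"%{name_contains}%")
    if department:
        clauses.append("department = ?")
        params.append(department)
    where = f" WHERE {' AND '.join(clauses)}" if clauses else ""
    # The total count lets the UI show how many pages of matches exist.
    total = conn.execute(
        f"SELECT COUNT(*) FROM employees{where}", params
    ).fetchone()[0]
    rows = conn.execute(
        f"SELECT id, name, department FROM employees{where} "
        f"ORDER BY id LIMIT ? OFFSET ?",
        params + [page_size, (page - 1) * page_size],
    ).fetchall()
    return total, rows


total, rows = search_employees(conn, name_contains="Mar", page=1, page_size=2)
print(total, rows)  # 3 [(1, 'Mary', 'IT'), (3, 'Maria', 'IT')]
```

Only the filter values come from the user; the column names and the SQL structure are fixed in code, which keeps the query safe from SQL injection.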

Row Virtualization

Row virtualization is another great approach. With this approach, only a few rows are initially loaded and displayed. As we scroll down the page, the rest of the rows are loaded and rendered on demand. This is the approach used by Facebook.
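The core of row virtualization is working out which rows fall inside the visible area for a given scroll position. The following is a minimal sketch that assumes every row has the same fixed height; the `visible_range` function, its parameters and the `overscan` buffer are hypothetical names for illustration, and this is not how any particular grid component implements it.

```python
import math


def visible_range(scroll_top, viewport_height, row_height, total_rows, overscan=5):
    """Return (first, last_exclusive) row indexes to render for a scroll position.

    All sizes are in pixels and every row is assumed to have the same height.
    `overscan` adds a few extra rows above and below the viewport so that
    small scroll movements do not reveal blank space.
    """
    # Index of the first row that is at least partly visible.
    first_visible = scroll_top // row_height
    # Index just past the last row that is at least partly visible.
    end_visible = math.ceil((scroll_top + viewport_height) / row_height)
    first = max(0, first_visible - overscan)
    last = min(total_rows, end_visible + overscan)
    return first, last


# A million rows, but only 30 of them need to be fetched and rendered.
first, last = visible_range(
    scroll_top=12_000, viewport_height=600, row_height=30, total_rows=1_000_000
)
print(first, last, last - first)  # 395 425 30
```

The returned range can be used to request just those rows from the server and render only them, while the scrollbar is sized as if all rows were present.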

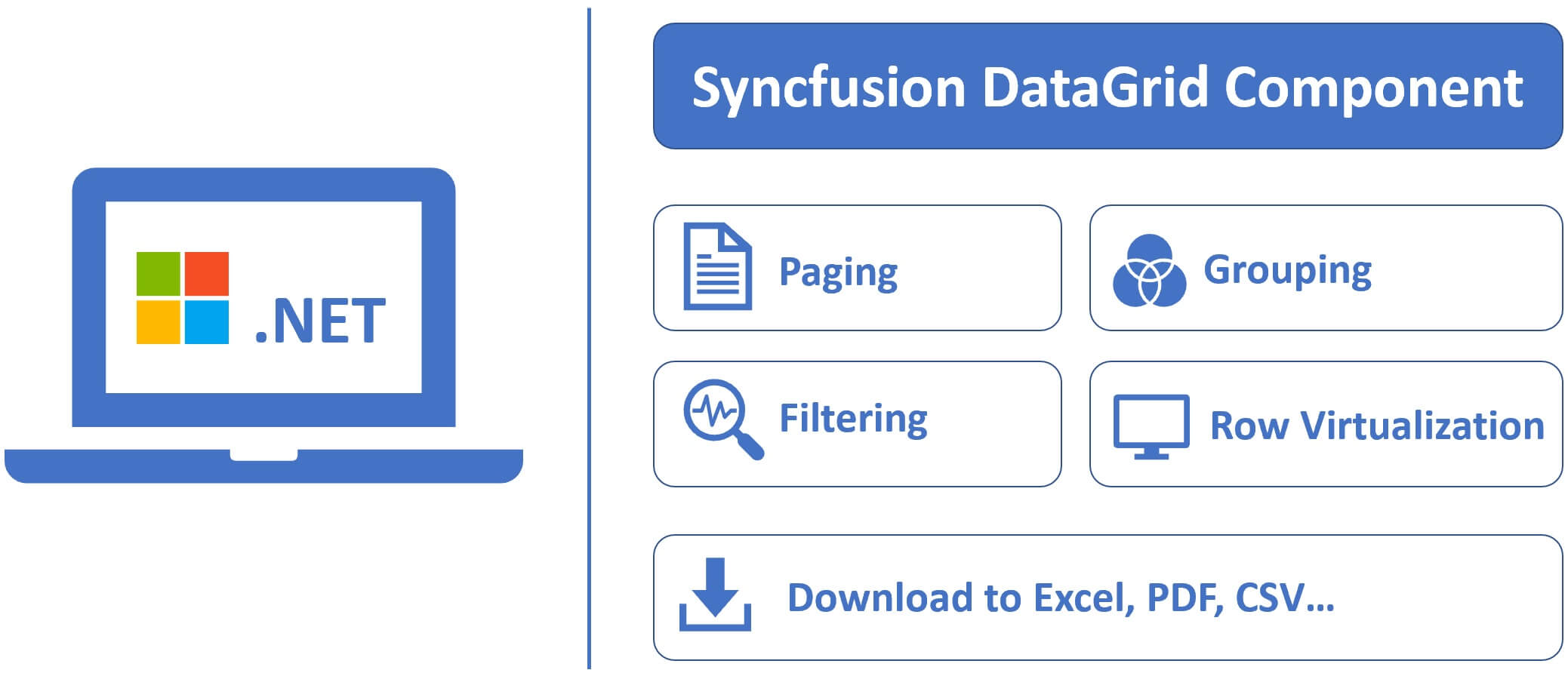

Syncfusion Blazor DataGrid Component

If you are using Microsoft .NET to build an enterprise-class, data-driven web application, then you may consider using the Syncfusion DataGrid component. This component supports all these features:

- Paging

- Filtering

- Grouping

- Row Virtualization

- Download to Excel, PDF, CSV and many more

It supports all these features on both the client side and the server side. It's tested and fine-tuned to work with high volumes of data, so performance should not be an issue. All the Syncfusion components are free to use with their community license, subject to its eligibility terms. Click here to download the free license.

© 2020 Pragimtech. All Rights Reserved.